
Towards Next Generation User Interfaces and Videoconferencing Systems

Dmitry Zotkin, Ramani Duraiswami, Larry S. Davis


Created on: 03-16-2001

Perceptive Interface and Reality Lab

Introduction

- Moore’s law:

  - Computing power is increasing

  - Computers are becoming more widespread

  - They are being used for new applications such as Virtual Reality

- Yet … human-computer interaction is still done using a keyboard and a mouse

- There is a need for better, unencumbered HCI

- The computer must:

  - Understand speech and gesture commands

  - Track the user reliably and focus on him or her to capture the audio and video modalities of interaction

  - Track the user’s pose and adjust the experience for Virtual Reality and Augmented Reality applications

- Our research aims to locate a moving user and capture the user’s gestures and voice.


Multimodal tracking algorithms

- Goal: Combine tracking of users from both video and audio data

- Helps track through occlusions and makes tracking more robust

- Uses the CONDENSATION (conditional density propagation) particle-filter tracking framework

  - The object state, which is not directly observable, is estimated statistically from available measurements that depend on the state (position, color, shape, etc.)

- Our multi-modal tracker uses both audio and video measurements

- Applied in an experiment to track the flight path of a bat as it captures prey
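The fusion idea can be illustrated with one predict, weight, and resample cycle of a CONDENSATION-style particle filter. This is a minimal sketch under assumptions not stated on this page: a 2-D position state, a random-walk motion model, a video measurement that reports a position, and an audio measurement that reports a bearing from a microphone array at the origin. All function names and noise parameters (`condensation_step`, `motion_sigma`, `video_sigma`, `audio_sigma`) are hypothetical. Each available measurement contributes one likelihood factor to a particle's weight; a measurement passed as `None` simply contributes no factor.

```python
import math
import random

def gaussian(err, sigma):
    """Unnormalized Gaussian likelihood of an error term."""
    return math.exp(-0.5 * (err / sigma) ** 2)

def angle_diff(a, b):
    """Smallest signed difference a - b between two angles, in radians."""
    return (a - b + math.pi) % (2 * math.pi) - math.pi

def condensation_step(particles, video_xy, audio_bearing, rng,
                      motion_sigma=0.1, video_sigma=0.2, audio_sigma=0.1):
    """One predict-weight-resample cycle (hypothetical models and parameters).

    particles: list of (x, y) position hypotheses in meters.
    video_xy: (x, y) reported by the video tracker, or None if unavailable.
    audio_bearing: source azimuth in radians seen from a microphone array at
        the origin, or None if unavailable.
    """
    # Predict: diffuse each hypothesis with an assumed random-walk motion model.
    predicted = [(x + rng.gauss(0, motion_sigma), y + rng.gauss(0, motion_sigma))
                 for x, y in particles]

    # Weight: multiply the likelihoods of whichever measurements are present.
    weights = []
    for x, y in predicted:
        w = 1.0
        if video_xy is not None:
            w *= gaussian(math.hypot(x - video_xy[0], y - video_xy[1]), video_sigma)
        if audio_bearing is not None:
            w *= gaussian(angle_diff(math.atan2(y, x), audio_bearing), audio_sigma)
        weights.append(w)
    total = sum(weights)
    if total == 0.0:  # every weight underflowed: fall back to uniform weights
        weights = [1.0] * len(predicted)
        total = float(len(predicted))

    # Estimate: weighted mean of the hypotheses.
    est_x = sum(w * p[0] for w, p in zip(weights, predicted)) / total
    est_y = sum(w * p[1] for w, p in zip(weights, predicted)) / total

    # Resample in proportion to the weights for the next frame.
    resampled = rng.choices(predicted, weights=weights, k=len(predicted))
    return resampled, (est_x, est_y)

if __name__ == "__main__":
    rng = random.Random(0)
    particles = [(rng.uniform(-3.0, 3.0), rng.uniform(0.5, 4.0)) for _ in range(500)]
    for _ in range(10):
        particles, estimate = condensation_step(
            particles, (1.0, 2.0), math.atan2(2.0, 1.0), rng)
    print(estimate)  # close to the true position (1.0, 2.0)
```

Because the weight is a product of per-modality factors, the same loop keeps running when one modality drops out, which is the property the occlusion-handling discussion relies on.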


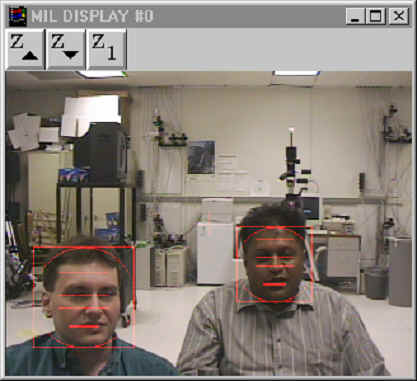

Videoconferencing and smart VC

- Unattended “smart” videoconferencing

- Send the most informative shot and noise-free voice data to the remote site, with the computer acting as the camera operator

- Requires similar multimodal algorithms

- Our experimental system:

  - Two cameras, two microphone arrays

  - Operates in real time on a PC

  - Combined audio-video tracking of a speaker

  - Sound enhancement for speech recognition

  - Smart camera switching

  - Example with two people in the picture
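The page does not say how the microphone arrays enhance the speaker's voice. One standard, basic array technique is delay-and-sum beamforming: once the tracker supplies the speaker's position, each channel is shifted so the speech arrives in phase, and averaging then reinforces the speech while uncorrelated noise partially cancels. The sketch below assumes integer-sample delays, a speed of sound of 343 m/s, and hypothetical function names; a real system would use fractional delays and would not necessarily use this method.

```python
import math

def steering_delays(mic_positions, source, fs, c=343.0):
    """Arrival lag of each microphone, in whole samples, relative to the nearest mic."""
    dists = [math.dist(m, source) for m in mic_positions]
    nearest = min(dists)
    return [round((d - nearest) / c * fs) for d in dists]

def delay_and_sum(channels, delays):
    """Delay-and-sum beamformer with non-negative integer-sample delays.

    channels: equal-length sample lists, one per microphone.
    delays: per-channel lag toward the look direction; each channel is advanced
        by its lag so the target signal lines up before averaging.
    """
    n = len(channels[0])
    out = [0.0] * n
    for signal, d in zip(channels, delays):
        for t in range(n):
            if t + d < n:
                out[t] += signal[t + d]
    return [v / len(channels) for v in out]
```

For example, with two microphones 0.343 m apart on a line pointing at a speaker 10 m away and a 16 kHz sample rate, `steering_delays` gives lags of `[0, 16]` samples, which `delay_and_sum` then removes before averaging.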


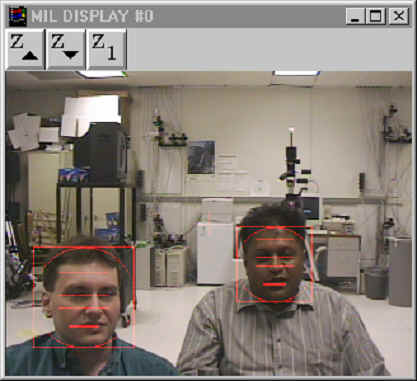

Occlusion handling and performance

- Within the CONDENSATION framework, the tracker can use partial measurements

- As a result, occlusion handling comes essentially for free, for example:

  - If the subject is occluded in one camera

  - Or if the audio cross-correlation is not reliable

- In these cases, tracking continues using only the available measurements

- The accompanying picture shows a track in a VC setup with a simulated occlusion in one camera

- The tracker is fast enough for real-time operation. To improve tracking accuracy, quasi-random sampling is used.
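Quasi-random (low-discrepancy) sequences cover a region more evenly than pseudo-random draws, so fewer particles are wasted on clumps and gaps. The page does not name the sequence or say where in the tracking loop it is applied; the sketch below assumes a Halton sequence, one common choice, and uses it to spread particles over a bounding box, for example when a track is initialized. The function names are hypothetical.

```python
def radical_inverse(i, base):
    """Van der Corput radical inverse of a positive integer i in the given base, in [0, 1)."""
    result, f = 0.0, 1.0 / base
    while i > 0:
        i, digit = divmod(i, base)
        result += digit * f
        f /= base
    return result

def halton_points(n, bases=(2, 3), start=1):
    """First n points of a Halton sequence in [0, 1)^d, using one prime base per dimension."""
    return [tuple(radical_inverse(i, b) for b in bases)
            for i in range(start, start + n)]

def quasi_random_particles(n, lo, hi, bases=(2, 3)):
    """Spread n particle positions evenly over the box [lo, hi) using Halton points."""
    return [tuple(l + u * (h - l) for u, l, h in zip(pt, lo, hi))
            for pt in halton_points(n, bases)]
```

The first three 2-D Halton points are (1/2, 1/3), (1/4, 2/3), and (3/4, 1/9): each new point lands in the largest remaining gap along each axis, which is what gives the even coverage.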


Fast multiple source localization

- Acoustic source localization in reverberant rooms is difficult because of reverberation and noise

- Developed a novel robust algorithm that actively searches space for sources

- The search is made fast by a dual coarse-to-fine hierarchical search in both space and frequency, which also makes the algorithm robust
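The spatial half of a coarse-to-fine search can be sketched as repeated grid refinement: evaluate a coarse lattice over the room, keep the best point, shrink the search box around it, and repeat. This is a minimal sketch, not the algorithm described above. It covers only the spatial hierarchy (the frequency hierarchy is not shown), it finds a single peak rather than several sources, and it treats the energy map as any callable `energy(x, y)`. The function name, its parameters, and the toy Gaussian energy are hypothetical.

```python
import math

def coarse_to_fine_search(energy, lo, hi, levels=5, grid=9, shrink=0.25):
    """Locate the maximum of energy(x, y) inside the room box [lo, hi].

    Each level evaluates a grid x grid lattice, keeps the best point, and
    shrinks the box to half its width around that point (clamped to the room),
    so the total cost is levels * grid**2 evaluations.
    """
    rx0, ry0 = lo
    rx1, ry1 = hi
    x0, y0, x1, y1 = rx0, ry0, rx1, ry1
    best = None
    for _ in range(levels):
        xs = [x0 + (x1 - x0) * i / (grid - 1) for i in range(grid)]
        ys = [y0 + (y1 - y0) * j / (grid - 1) for j in range(grid)]
        best = max(((x, y) for x in xs for y in ys),
                   key=lambda p: energy(p[0], p[1]))
        half_w = (x1 - x0) * shrink
        half_h = (y1 - y0) * shrink
        x0, x1 = max(rx0, best[0] - half_w), min(rx1, best[0] + half_w)
        y0, y1 = max(ry0, best[1] - half_h), min(ry1, best[1] + half_h)
    return best

def toy_energy(x, y):
    """Hypothetical smooth energy map with one source at (2.3, 1.7)."""
    return math.exp(-((x - 2.3) ** 2 + (y - 1.7) ** 2))
```

With the defaults, `coarse_to_fine_search(toy_energy, (0.0, 0.0), (5.0, 4.0))` uses 405 evaluations and lands within a few centimeters of (2.3, 1.7). A fine grid at the same final resolution would need far more. With several sources, the refinement could be started from each strong coarse-level peak instead of only the best one.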

Figure: Localization error (m) vs. signal-to-noise ratio (dB) for a conventional algorithm and our doubly hierarchical algorithm

Figure: Energy map of 4 sound sources in a room


HRTF project and video tracking

- A new large project: create Virtual Auditory Spaces

- Humans localize sound sources using cues that arise from the shape of the ears and head

- These cues change with the position and pose of the user

- The project goal is to synthesize these cues by fast numerical computation

- Demonstration: render a virtual audio scene for the user through headphones

- To keep the rendered scene stable, low-latency tracking of the user’s position and orientation is required

  - A multiple-camera setup tracks the position in 3D

- This work is ongoing.
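The tracking requirement follows from how headphone rendering works. The cue filters must be chosen by the source direction *relative to the listener's head*, so every head turn changes which filters apply. If tracking lags, the virtual source appears to swing with the head instead of staying put. The sketch below assumes a horizontal-plane scene, azimuth measured counterclockwise from the facing direction, and a small hypothetical table of head-related impulse responses (HRIRs) keyed by azimuth. It uses nearest-neighbor lookup rather than interpolation. None of the names or values come from the project.

```python
import math

def head_relative_azimuth(source_xy, head_xy, head_yaw):
    """Azimuth of a source relative to the listener's facing direction, in [-pi, pi]."""
    world = math.atan2(source_xy[1] - head_xy[1], source_xy[0] - head_xy[0])
    rel = world - head_yaw
    return math.atan2(math.sin(rel), math.cos(rel))  # wrap to [-pi, pi]

def convolve(signal, kernel):
    """Direct-form linear convolution of two sample lists."""
    out = [0.0] * (len(signal) + len(kernel) - 1)
    for i, s in enumerate(signal):
        for j, k in enumerate(kernel):
            out[i + j] += s * k
    return out

def render_binaural(mono, hrir_table, azimuth):
    """Convolve a mono source with the HRIR pair measured nearest to `azimuth`.

    hrir_table: hypothetical dict {azimuth_radians: (left_ir, right_ir)}.
    """
    def angular_distance(a):
        d = a - azimuth
        return abs(math.atan2(math.sin(d), math.cos(d)))
    left_ir, right_ir = hrir_table[min(hrir_table, key=angular_distance)]
    return convolve(mono, left_ir), convolve(mono, right_ir)
```

For a source fixed at world position (0, 1) and a listener at the origin, the head-relative azimuth is pi/2 (to the left) when the listener faces along +x, and 0 (straight ahead) after turning to a yaw of pi/2. The renderer switches HRIR pairs accordingly, which is why the orientation estimate must be both accurate and low-latency.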
